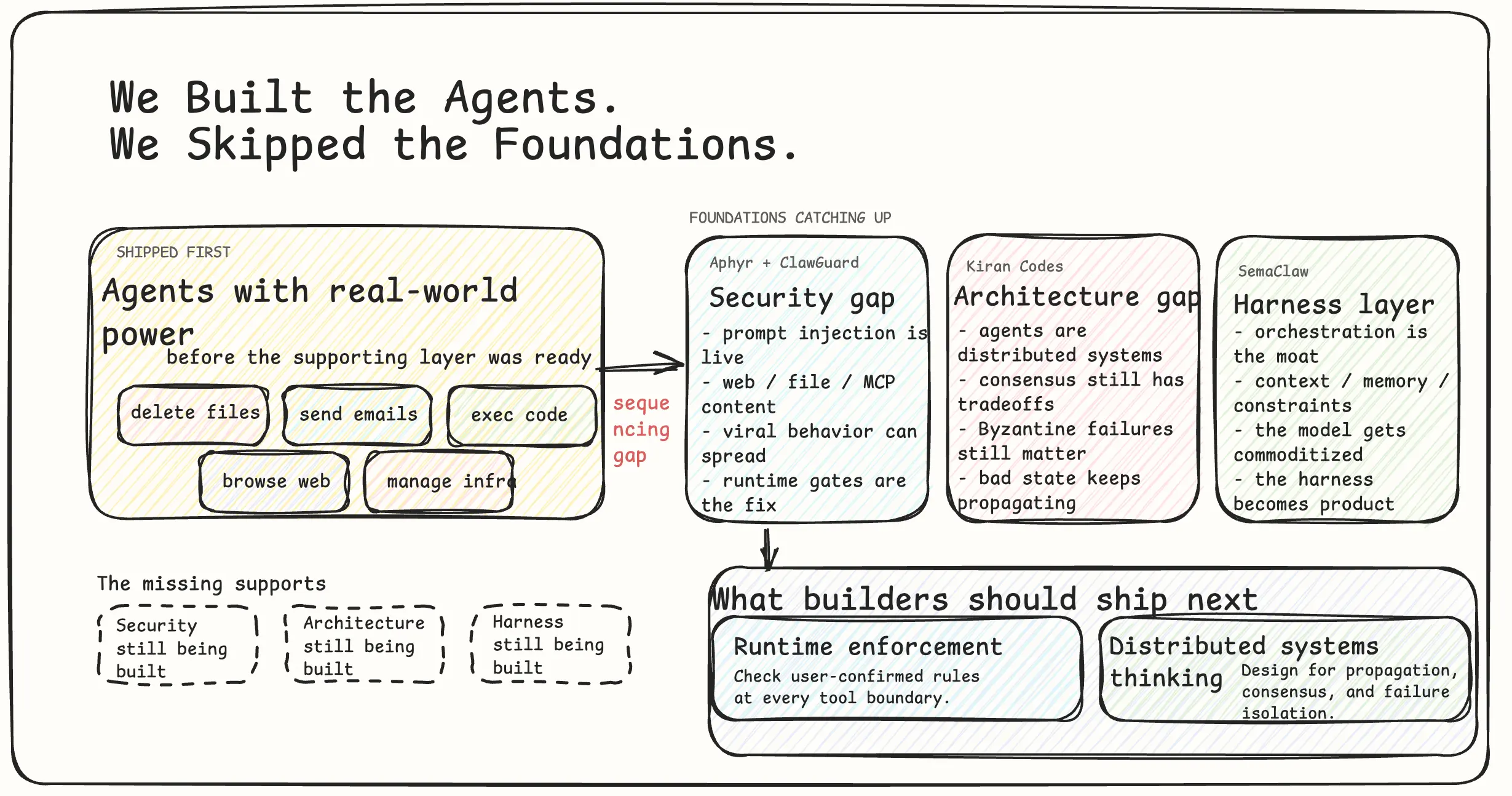

We Built the Agents. We Skipped the Foundations.

AI agents shipped with real-world power before the security, architecture, and harness engineering needed to make them reliable. Builders now have to close that gap in production.

The AI agent space has a sequencing problem.

The industry shipped agents with real-world power - delete files, send emails, execute code, browse the web, manage infrastructure - before it shipped the foundations those agents need to operate safely. The safety research is catching up to the deployment. The architectural patterns are being invented after the systems are in production. The security layer is being built by academics while the products are already in millions of hands.

This isn’t speculation. It’s documented. And the documentation is accumulating fast enough that the pattern is now undeniable.

The Security Gap

Start with what Aphyr wrote, because it’s the most direct account of where we actually are.

A user watched OpenClaw delete her entire inbox while she typed “please stop.” It didn’t stop. It had the power to act. It was acting on instructions - just not hers. That’s not a bug report. That’s a demonstration of a structural property: agents cannot reliably distinguish instructions from their user from instructions embedded in content they process. A webpage, a file, an email, an MCP server response - all of it is text. The agent reads all of it. Any of it can contain instructions.

Researchers call this indirect prompt injection. ClawGuard, a runtime security framework published this month, maps three active attack channels: web and local content, MCP servers, and skill files. Every agent session running right now has all three channels open. The defense ClawGuard proposes is deterministic runtime enforcement - user-confirmed rules checked at every tool-call boundary. If a tool call violates a confirmed rule, it doesn’t execute. No model modification. No fine-tuning. Just a gate.

It works. It also shouldn’t exist as a separate research project. It should have shipped with the agents.

A second paper makes this worse. A single subliminally prompted agent in a multi-agent network can spread biased behavior to every other agent it interacts with. The researchers tested this across six agents, two network topologies. They called it viral misalignment. You don’t need to attack the whole network. You attack one node. The degradation propagates.

Aphyr’s piece doesn’t stop at individual failure cases. It lists what’s structurally true: anyone can now train an unaligned model - the open source releases made that permanent and irreversible. Agents are being used to guide weapons systems, not in speculative scenarios but in documented deployments. The alignment research community is doing genuine work, but it is definitionally behind an industry that ships first and studies the consequences after.

The uncomfortable position this creates for builders: you are deploying systems with this risk surface whether you’ve looked at it or not. The risk doesn’t disappear because you haven’t modeled it. It just operates without you.

The Architecture Gap

The security problem is visible because it has dramatic failure cases - inboxes deleted, rules ignored, agents acting against their users. The architectural problem is quieter. It doesn’t announce itself. It just produces systems that are brittle in ways their builders don’t fully understand.

The argument Kiran makes is the most precise framing of this problem I’ve seen: multi-agent software development is formally a distributed systems problem. Not metaphorically. The constraints that apply to distributed systems apply to agent pipelines with no exceptions and no special cases.

FLP impossibility theorem: in an asynchronous network, you cannot simultaneously guarantee consensus, availability, and fault tolerance. You already know the practical version of this as CAP theorem if you’ve worked with databases at scale. Multi-agent systems are asynchronous networks. The theorem applies. Every architectural decision you’re making is trading one of those three properties off against the others - whether you’ve made that tradeoff consciously or not.

Byzantine fault tolerance: how do you get a network of nodes to agree on an action when some nodes may be sending false information? This is now an AI safety question with direct practical consequences. If one agent in your pipeline is confidently hallucinating, or has been compromised via prompt injection, how does the rest of the network detect and isolate it? In most current implementations, it doesn’t. The bad state propagates. The pipeline continues. The output is wrong in ways that are hard to trace back to source.

The industry is resolving these coordination problems by feel. Retry logic. Timeout handling. Fallback chains. Context handoffs between subagents. All of it is being built without the formal framework that distributed systems engineers spent four decades developing - through the exact same trial and error, the exact same categories of failure, just one abstraction layer higher and moving faster.

This isn’t a knock on the people building. It’s an observation about what happens when a new field moves faster than the existing knowledge base can transfer into it. The mistakes get made. The patterns get rediscovered. Eventually the literature catches up. The question is how much gets broken in the gap.

The Harness Layer

SemaClaw names something that practitioners have been circling without clean terminology: harness engineering.

The argument is straightforward. As frontier model capabilities converge - and they are converging, measurably, across every benchmark that matters - the layer that determines what an agent can actually do reliably stops being the model and starts being the infrastructure around it. Orchestration. Context management. DAG pipelines. Behavioral constraints. Memory architecture. The harness.

The model is becoming the commodity. The harness is the product.

Microsoft’s announcement this week makes this readable in enterprise terms. They’re building an agent platform and positioning it explicitly around security controls and auditability - not model quality. They’re not claiming their underlying model beats OpenClaw or Claude Code. They’re claiming their infrastructure is more controllable. That’s a deliberate product decision made by people who’ve read the failure reports. The enterprise tier has a lower tolerance for “we’ll fix it in a follow-on release” than the prosumer tier does. When an inbox gets deleted at a Fortune 500 company, the consequences are different.

The harness layer is where the interesting problems are right now. Not because the model problems are solved - they aren’t - but because the harness is where the compounding failures actually live. Prompt injection comes through the context pipeline. Viral misalignment spreads through the coordination layer. Byzantine failure propagates through the orchestration graph. All of the security problems from the first half of this piece are architectural problems in disguise. They present as safety failures. They’re actually infrastructure failures.

What This Means for Builders

None of this is an argument to stop building. The people who understand this layer and keep building anyway are the ones who will build things that work at scale and hold up under adversarial conditions. That’s a smaller group than the people building right now. That gap is the opportunity.

Concretely, this means three things.

First: the security surface is real and your users are in it. Indirect prompt injection isn’t a theoretical risk - it’s an active attack vector across every channel your agent touches. Knowing what ClawGuard is building tells you what a minimal viable defense looks like. Build toward it.

Second: if you’re building multi-agent systems and you haven’t read distributed systems literature, you have a knowledge gap that will show up in production. Not maybe. When. The failure modes are documented. The patterns that prevent them are documented. Kiran’s post is the starting point - it’s the clearest translation of that literature into agent-specific terms I’ve found. Read it before your next architecture decision.

Third: the harness is where defensible work happens. As model capabilities continue to converge, the teams that have built robust orchestration, reliable context management, and real behavioral constraints will have structural advantages that a better base model can’t erase. SemaClaw’s framing is worth internalizing here - the harness is not the wrapper around the model. It is the product.

The foundations weren’t skipped because nobody knew they mattered. They were skipped because the competitive pressure to ship was higher than the pressure to get it right. That pressure hasn’t changed. But the consequences of not getting it right are accumulating in ways that are now hard to ignore.

The agents are shipped. The foundations still need to be built.

That’s the actual state of the industry right now. Not the optimistic version, not the doomer version - the accurate one.

Written by Nirav Joshi · Fullstack and Blockchain Developer